The original IEEE FP16 was not designed with deep learning applications in mind; its dynamic range is too narrow. BFLOAT16 solves this, providing dynamic range identical to that of FP32. Range: ~1.18e-38 … ~3.40e38 with 2 to 3 significant decimal digits. Unlike FP16, which typically requires special handling via techniques such as loss scaling, BF16 comes close to being a drop-in replacement for FP32 when training and running deep neural networks.
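The range and precision trade-off can be checked with a small sketch that uses only the Python standard library. It is an emulation, not how any framework implements these types: `to_bf16` and `to_fp16` are hypothetical helper names, BF16 is modeled as the upper 16 bits of an FP32 value with round-to-nearest-even, and inputs are assumed to be finite numbers within FP32 range.

```python
import struct


def to_bf16(x: float) -> float:
    """Round x to the nearest bfloat16 value (emulated) and return it as a float.

    Assumes x is finite and within FP32 range; NaN handling is omitted.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]  # FP32 bit pattern
    # Round to nearest, ties to even, on the 16 low bits that get dropped.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    bits = (((bits + rounding_bias) >> 16) << 16) & 0xFFFFFFFF
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def to_fp16(x: float) -> float:
    """Round x to IEEE half precision; return +/-inf if the value overflows."""
    try:
        return struct.unpack("<e", struct.pack("<e", x))[0]
    except OverflowError:
        return float("inf") if x > 0 else float("-inf")


# Range: 1e5 overflows FP16 (max is 65504) but fits in BF16.
print(to_fp16(1e5))    # inf
print(to_bf16(1e5))    # 99840.0

# Precision: FP16 keeps more significand bits than BF16.
print(to_fp16(1.001))  # 1.0009765625
print(to_bf16(1.001))  # 1.0

# Small magnitudes: 1e-8 underflows to zero in FP16 but stays nonzero in BF16.
print(to_fp16(1e-8))   # 0.0
print(to_bf16(1e-8) > 0.0)

# Loss scaling in miniature: scaling by 1024 before the FP16 cast keeps the
# value representable; dividing afterwards recovers an approximation.
print(to_fp16(1e-8 * 1024) / 1024 > 0.0)
```

The last lines illustrate why FP16 training usually relies on loss scaling: small gradient-like values vanish unless they are scaled up before being cast, whereas the BF16 emulation keeps them without any scaling.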

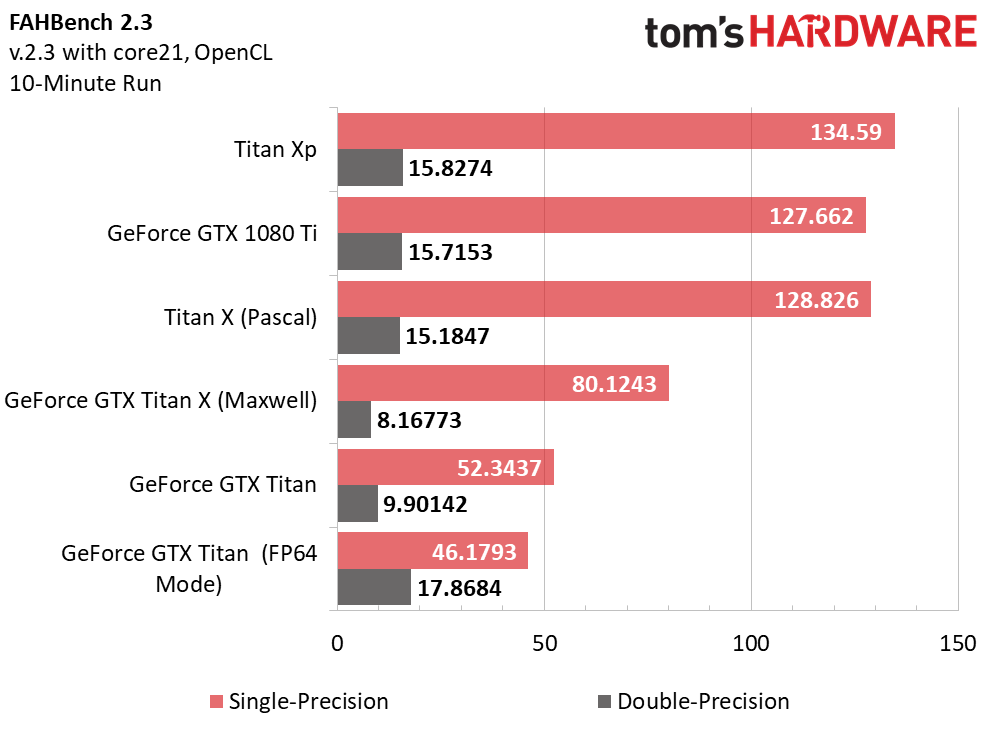

Another 16-bit format originally developed by Google is called “Brain Floating Point Format”, or “bfloat16” for short. The name comes from “Google Brain”, an artificial intelligence research group at Google where the idea for this format was conceived.

See also: “Half Precision” 16-bit Floating Point Arithmetic; Half-Precision Floating-Point, Visualized.

FP16 is well supported on modern GPUs today. It was poorly supported on older gaming GPUs (with 1/64 the performance of FP32; see the post on GPUs for more details). It is not supported in x86 CPUs (as a distinct type).

FP16 is supported in TensorFlow (as tf.float16) and PyTorch (as torch.float16 or torch.half).

Otherwise, it can be used with special libraries.
